A significant confrontation has emerged between the United States Department of Defense (DOD) and the artificial intelligence company Anthropic. This clash raises crucial questions about the governance of military AI, specifically regarding who should set the boundaries for its use: the executive branch, private companies, or Congress along with the broader democratic process.

The conflict escalated when Defense Secretary Pete Hegseth reportedly gave Anthropic’s CEO, Dario Amodei, a deadline to permit unrestricted DOD access to the company’s AI systems. When Anthropic declined, the DOD designated the company a supply chain risk and ordered federal agencies to phase out its technology.

Anthropic has made it clear that it will not allow its models to be used for domestic surveillance of U.S. citizens or for fully autonomous military targeting. Secretary Hegseth criticized what he termed “ideological constraints” within commercial AI systems, asserting that the government should dictate lawful military applications, not the vendors. In a recent speech at SpaceX, he stated, “We will not employ AI models that won’t allow you to fight wars.”

The core of this conflict appears to be a procurement disagreement. In a market economy, the military selects the products and services it requires, while companies determine what they are willing to offer. If a product does not meet operational needs, the DOD can choose another vendor. Conversely, if a company believes that certain uses of its technology are unsafe or misaligned with its values, it can opt not to sell.

A coalition of firms has even signed an open letter against weaponizing general-purpose robots, illustrating that companies can take a stand based on ethical considerations. However, the situation becomes more complex with the DOD’s decision to label Anthropic a “supply chain risk.” This designation is typically reserved for genuine national security threats, not for punishing a company that declines specific contractual terms. Secretary Hegseth declared that “effective immediately, no contractor, supplier, or partner that does business with the U.S. military may conduct any commercial activity with Anthropic.” This move is likely to face legal challenges, and it raises the stakes well beyond a simple contract dispute.

The implications of this disagreement extend into critical civil liberties issues, particularly domestic surveillance. The U.S. government operates under constitutional limits on monitoring its own citizens, so a company’s refusal to let its tools support domestic surveillance aligns with longstanding democratic principles. The DOD does not claim any intention to use the technology for unlawful surveillance. Its argument is that compliance with the law is the government’s responsibility and that vendors should not be required to embed legal constraints into their products.

Anthropic, for its part, has invested significantly in training its systems to refuse high-risk tasks, including surveillance assistance. Thus, the dispute centers not only on current intentions but also on which authority should impose such constraints: the state through legal oversight or the developer through technical design.

The second key issue, the company’s opposition to fully autonomous military targeting, presents additional complexities. The DOD has policies mandating human judgment in the use of force, and autonomy in weapon systems remains under debate in military and international forums. A private company may reasonably conclude that its technology is not yet reliable enough for certain battlefield applications, while the military may argue that such capabilities are essential for deterrence and operational effectiveness.

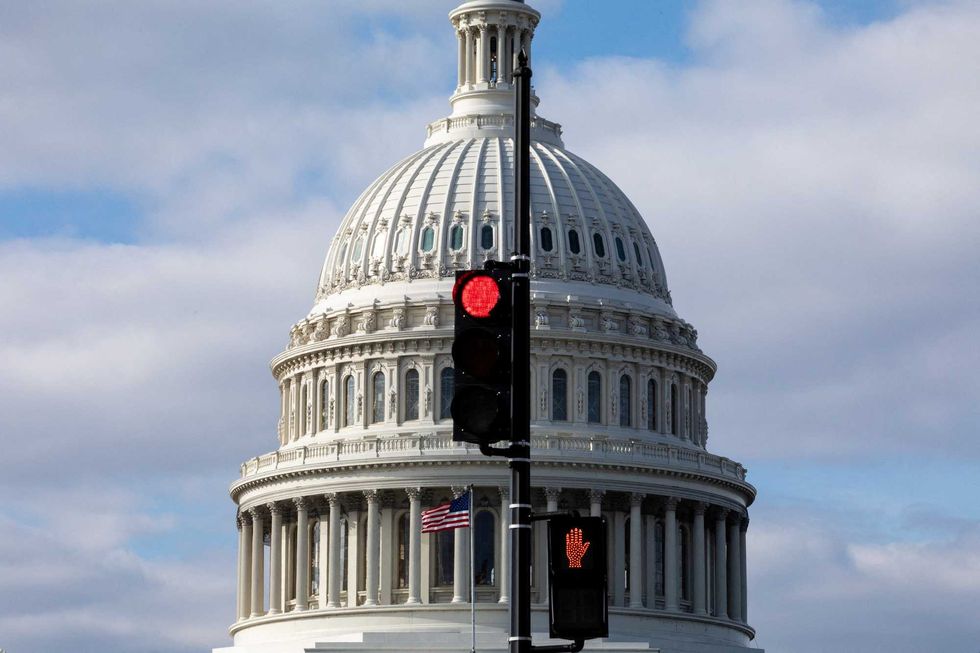

This fundamental disagreement illustrates a deeper concern: the governance of military AI should not be determined through ad hoc negotiations between a cabinet member and a corporate executive. The U.S. government must state openly which AI capabilities it deems essential for national defense, so that Congress can debate those positions and reflect them in doctrine, oversight mechanisms, and statutory frameworks. The rules governing military AI should be transparent and clear to both companies and the public.

The U.S. distinguishes itself from authoritarian regimes by emphasizing that power functions within democratic institutions and legal constraints. This distinction diminishes if AI governance is settled primarily through executive ultimatums behind closed doors.

Strategically, if companies perceive that engaging with federal markets necessitates relinquishing all deployment conditions, some may choose to exit those markets. Others might compromise by weakening safeguards in order to remain eligible for government contracts. Neither outcome would enhance U.S. technological leadership.

While the DOD is justified in opposing potential “ideological constraints” that could impede lawful military operations, there is a difference between rejecting arbitrary restrictions and dismissing the role of corporate risk management in shaping deployment conditions. In high-risk domains such as aerospace and cybersecurity, contractors often impose safety standards and operational limitations as part of responsible commercialization. AI should not be seen as an exception to this practice.

Moreover, built-in safeguards can enhance operational effectiveness rather than hinder it. In many high-risk fields, multiple layers of oversight—such as internal controls, technical fail-safes, auditing mechanisms, and legal reviews—function together to ensure safety and accountability.

The DOD should maintain ultimate authority over lawful military use but can acknowledge that certain design-level guardrails might complement its existing oversight structures. Redundancy in safety systems can strengthen operational integrity.

Nonetheless, a company’s unilateral ethical commitments do not replace the need for public policy. When technologies impact national security, private governance has its limits. Decisions regarding surveillance authorities, autonomous weapons, and rules of engagement should reside within democratic institutions.

This situation highlights a pivotal moment in the governance of AI. As AI systems grow increasingly powerful, influencing intelligence analysis, logistics, cyber operations, and battlefield decision-making, they become too essential to be regulated solely by corporate policy or executive discretion.

The resolution lies not in empowering one side over the other but in reinforcing the institutions that mediate between them. Congress should clarify the legal boundaries for military AI use and investigate the adequacy of oversight mechanisms. The DOD must articulate a detailed doctrine for human control, auditing, and accountability. Civil society and industry should engage in structured consultations rather than sporadic confrontations.

If AI guardrails can be compromised through contractual pressures, they risk being treated as negotiable. However, if established in law, they can become stable expectations. Ultimately, democratic constraints on military AI use must reside in statutes and doctrines, rather than in private contract negotiations.